Varigence Blog

BimlFlex 2018 - Integrating with MDS - Load to MDS

This BimlFlex webinar looks at integrating BimlFlex with Microsoft SQL Server MDS and the load process into MDS.

Preparing the MDS Model

The video starts by creating a Master Data Services Model for Product management. The Model contains a Product Entity, which in turn contains attributes. Creating the Entity also creates an MDS staging table in the MDS database. To read data out of MDS, a subscription view is created. The load process also uses this view to check for existing records.

Creating the BimlFlex metadata

In BimlFlex, the metadata is managed through a central repository. The management of this metadata is done through an Excel front end. This allows for modelling and management of metadata, source to target mappings and more. The example in the video uses a direct connection from source to destination, loading MDS straight from the AdventureWorks LT source system. A common approach is to integrate the MDS loads into the data warehouse loads, using either a source view on top of the data warehouse or a staging table that is part of a source system load as the source for the MDS load.

For the load to MDS the MDS connection is specified as Master Data Services in the IntegrationStage column.

A Batch for the load process is created. In the example it is named LOAD_AWLT_TO_MDS to also illustrate the direction of data.

A Project for the load process is created. In the example it is also named LOAD_AWLT_TO_MDS to illustrate the direction of data. It uses the AdventureWorks connection as the source and the MDS connection as the target, and it uses the created Batch for the batch grouping.

For the objects, two tables are specified: the source table SalesLT.Product and the destination MDS staging table stg.Product_Leaf.

The required columns for the destination model are specified to match the entity in MDS, and any required transformations or derivations are added. The source columns are mapped to the destination columns.

Build solution in BimlStudio

Once the model is completed it is time to open the solution in BimlStudio and build out all required artefacts. BimlStudio reads all the metadata from the BimlFlex metadata repository and provides generation of an SSIS Project for the MDS load. Once the builds are completed the solution can be opened and run in Visual Studio or it can be passed to a continuous build, continuous deployment pipeline.

Opening the Project in Visual Studio illustrates the load process. The project includes a batch that calls all individual entity loads, in this case the single Product load process. The Product load process loads from the source, does a lookup to check for existing records and loads the data to the MDS staging table. The package then starts the MDS ingestion process that loads the data from the staging table to the entity.
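
The lookup step described above can be sketched in a few lines of Python. This is only an illustration of the pattern, not the generated SSIS package: the function name, the `Code` key column and the sample rows are hypothetical, and it assumes the lookup is used to skip rows that the subscription view already contains. The generated package may treat matched rows differently.

```python
from typing import Dict, Iterable, List, Set


def rows_to_stage(source_rows: Iterable[Dict[str, str]],
                  existing_codes: Set[str],
                  key: str = "Code") -> List[Dict[str, str]]:
    """Return the source rows whose key is not yet present in the target."""
    # The lookup: keep only rows that the subscription view does not already hold.
    return [row for row in source_rows if row[key] not in existing_codes]


# Hypothetical sample rows standing in for SalesLT.Product.
source = [
    {"Code": "FR-R92B-58", "Name": "HL Road Frame - Black, 58"},
    {"Code": "HL-U509-R", "Name": "Sport-100 Helmet, Red"},
]
# Hypothetical set of codes read from the MDS subscription view.
already_in_mds = {"FR-R92B-58"}

staged = rows_to_stage(source, already_in_mds)
print(staged)
```

Only the rows returned by the lookup would be written to the staging table before the MDS ingestion process is started.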

Once the load is completed the loaded data can be reviewed in the model explorer.

Additional steps

For housekeeping and to manage earlier versions of MDS it is possible to create a preprocessing step to truncate all MDS staging tables. For SQL Server 2016 and later this can be automated through MDS. For earlier versions the tables should be maintained so that they don't get filled up with old data.

As a post process it is possible to start the MDS Model validation process. This validates the model and applies any defined business rules.

The model load is now completed. The model is ready for data steward management and the subscription view is ready to be ingested into the data warehouse as a separate source.

Watch BimlFlex 2018 - Integrating with MDS - Load to MDS

BimlFlex 2018 - Dimensions and Facts

This BimlFlex webinar looks at Dimensional Modelling and the Dimensional data warehouse.

The video starts with a short overview of the Dimensional Modelling approach. This highlights that the dimensional warehouse is targeted at, and optimized for, end user querying. It uses dimensions to provide context and facts to provide the measures for analysis. The dimensional warehouse is denormalised and optimized for analysis, in contrast to 3NF or Data Vault modelling approaches, which are more optimized towards data warehousing. Most classical BI analysis and reporting tools are geared towards easily reading the data from a dimensional model.

Choosing the architecture

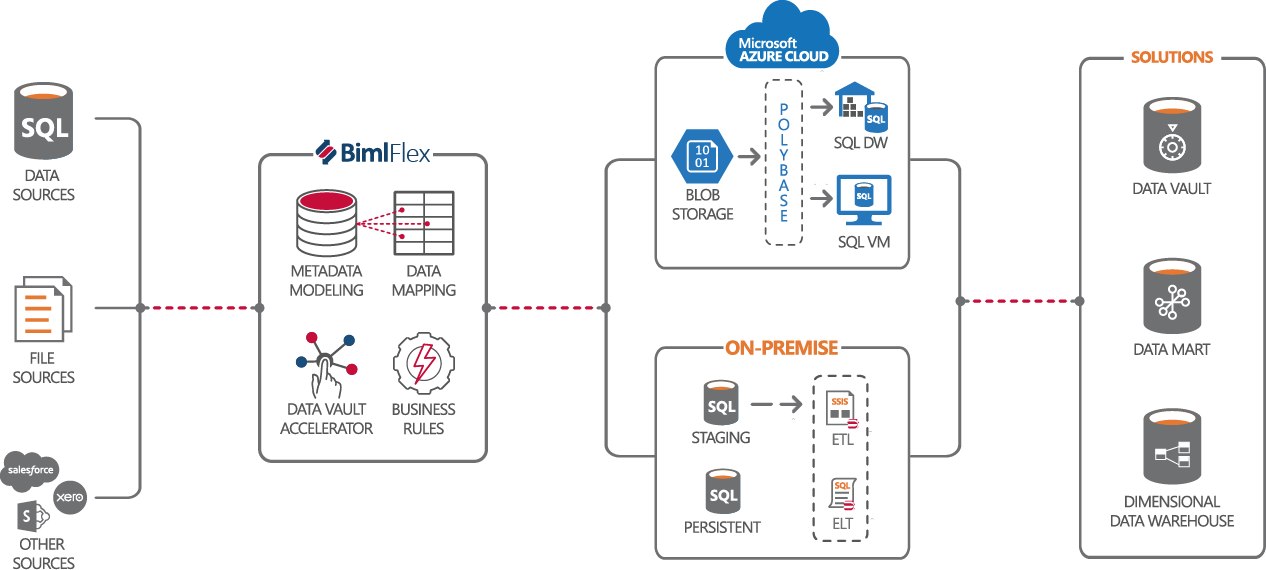

In the BimlFlex architecture it is possible to choose between Microsoft Azure cloud based data warehousing and on-premises data warehousing on SQL Server. The Microsoft Azure cloud based approach uses Polybase and blob storage of files as the ingestion mechanism for loads. This uses an ELT approach to load the data into the data warehouse. The data can be extracted using SSIS from traditional sources. The data warehousing solution can be either Azure SQL Data Warehouse or SQL Server 2016 and newer, commonly running in a VM in Azure. The on-premises approach uses either traditional loads through an SSIS based ETL process or an ELT based process into a classical installation of SQL Server.

Regardless of the ultimate destination, the BimlFlex modelling approach and the required metadata are the same, and it is possible to easily migrate a solution from an on-premises approach to a cloud based approach. For organizations reviewing the cloud as the future platform for data warehousing, this means it is possible to use the on-premises approach today and move to the cloud down the line without having to recreate the data warehousing solution.

In BimlFlex, the metadata is managed through a central repository. The management of this metadata is done through an Excel front end. This allows for modelling and management of metadata, source to target mappings and more.

Importing source metadata

The dimensional model approach uses a direct source to target load model. It connects to and reads the data from a source or a prepared layer out of a data warehouse to populate the facts and dimensions. In this demo we connect to and read the metadata out of a set of views that have been prepared in the WideWorldImporters source system to represent the source for the dimensional data warehouse.

Model the dimensional data warehouse

For imported metadata objects, it is possible to use the BimlFlex cloning function to create the destination dimensions and facts. For identity-based surrogate keys in the data warehouse, the cloning mechanism can add a surrogate key column so that the end result directly follows the classical dimensional model. The cloning creates the full target structure as well as the source to target mappings for all source columns.

Once the metadata is imported and all destinations are created, it is time to define the relationships between the Facts and Dimensions. These references allow BimlFlex to create the lookups from the fact load to the connected dimensions.
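
As an illustration of what such a lookup does, the following Python sketch swaps a business key on each fact row for the surrogate key of the matching dimension row. It is not the code BimlFlex generates: the function, the column names and the use of -1 for an unmatched key are all assumptions made for the example.

```python
from typing import Any, Dict, List


def resolve_dimension_keys(fact_rows: List[Dict[str, Any]],
                           dimension_index: Dict[str, int],
                           business_key: str,
                           surrogate_key: str,
                           unknown_key: int = -1) -> List[Dict[str, Any]]:
    """Add the dimension surrogate key to each fact row."""
    resolved = []
    for row in fact_rows:
        out = dict(row)  # copy so the input rows are left untouched
        # Look up the surrogate key; fall back to the assumed unknown member.
        out[surrogate_key] = dimension_index.get(row[business_key], unknown_key)
        resolved.append(out)
    return resolved


# Hypothetical dimension content: business key -> identity-based surrogate key.
customer_index = {"CUST-001": 1, "CUST-002": 2}

# Hypothetical fact rows as read from a source view.
sales = [
    {"CustomerCode": "CUST-002", "Quantity": 3},
    {"CustomerCode": "CUST-999", "Quantity": 1},  # no matching dimension row
]

facts = resolve_dimension_keys(sales, customer_index, "CustomerCode", "CustomerKey")
print(facts)
```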

For dimensional attributes it is also possible to define the change types. Each dimensional attribute column can be defined as either Type 1 or Type 2. This allows BimlFlex to create pure Type 1 dimensions, pure Type 2 dimensions or mixed, Type 6, dimensions.
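
The difference between the two change types can be shown with a small Python sketch. It is a simplified model, not the BimlFlex implementation: the row layout (`attributes`, `start_date`, `end_date`, `is_current`) and the function name are hypothetical. A change to a Type 1 column overwrites the value on every version of the row, while a change to a Type 2 column closes the current version and adds a new one.

```python
from typing import Any, Dict, List, Set


def apply_change(history: List[Dict[str, Any]],
                 incoming: Dict[str, Any],
                 type2_columns: Set[str],
                 load_date: str) -> List[Dict[str, Any]]:
    """Apply incoming attribute values to the version history of one dimension member."""
    current = history[-1]
    changed = {c for c, v in incoming.items() if current["attributes"].get(c) != v}
    type1_changes = {c: incoming[c] for c in changed - type2_columns}
    type2_changes = {c: incoming[c] for c in changed & type2_columns}

    # Type 1: overwrite the value on every existing version, keeping no history.
    result = [{**v, "attributes": {**v["attributes"], **type1_changes}} for v in history]

    if type2_changes:
        # Type 2: close the current version and append a new current version.
        latest = result[-1]
        result[-1] = {**latest, "end_date": load_date, "is_current": False}
        result.append({
            "attributes": {**latest["attributes"], **type2_changes},
            "start_date": load_date,
            "end_date": None,
            "is_current": True,
        })
    return result


# Hypothetical product row where Name is Type 1 and Color is Type 2.
history = [{
    "attributes": {"Name": "Bike", "Color": "Red"},
    "start_date": "2018-01-01", "end_date": None, "is_current": True,
}]

after_rename = apply_change(history, {"Name": "Bicycle", "Color": "Red"}, {"Color"}, "2018-02-01")
after_recolor = apply_change(after_rename, {"Name": "Bicycle", "Color": "Blue"}, {"Color"}, "2018-03-01")
print(len(after_rename), len(after_recolor))
```

A dimension where both kinds of column are present behaves as the mixed case: the rename leaves a single row, and the colour change adds a second one.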

Build solution in BimlStudio

Once the model is completed it is time to open the solution in BimlStudio and build out all required artefacts. BimlStudio reads all the metadata from the BimlFlex metadata repository and provides generation of all SQL scripts as well as SSIS and SSDT Project generation for all parts of the data warehousing solution. Once the builds are completed the solution can be opened and run in Visual Studio or it can be passed to a continuous build, continuous deployment pipeline.

Using On-premises SQL Server

For an on-premises solution the generated artefacts will be SQL table scripts and projects for the database and SSIS Projects with data flows for loading the data from the source database views to the data warehouse.

Using Cloud based Azure SQL Data Warehouse

To repoint the data warehouse to use an Azure SQL Data Warehouse, the only changes needed are:

- Update the `ConnectionString` to point to the Azure SQL Data Warehouse
- Update the Connection Type to `OLEDB SQL Based ELT` rather than the on-premises use of `OLEDB`
- Update the System Type to `Azure SQLDW`.

For Azure SQL Data Warehouse there are additional features available, such as splitting and compressing the export files for optimized load across the chosen data warehouse configuration.

Once the changes are done, the BimlStudio project will provide all required artefacts for implementing the data warehouse on Azure SQL Data Warehouse. This includes packages for extracting data from the source database into flat files, the mechanism to move them to Azure Blob Storage, the External Table definitions to expose the data from the files to the data warehouse and the ELT load Stored Procedures that load the facts and dimensions with the data from the files. For convenience and orchestration help there is also an SSIS project with a package that calls all the load Stored Procedures in the right order.
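
One way to picture "the right order" is as a dependency sort in which each fact load waits for the dimensions it looks up. The Python sketch below uses the standard library `graphlib` module (Python 3.9 or later) to derive such an order. The procedure names are hypothetical, and the assumption that dimensions load before facts is an inference from the fact-to-dimension lookups, not a description of how the generated package works internally.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set


def load_order(dependencies: Dict[str, Set[str]]) -> List[str]:
    """Return an order in which every load runs after the loads it depends on."""
    return list(TopologicalSorter(dependencies).static_order())


# Hypothetical load procedures mapped to the loads that must finish first.
dependencies = {
    "dim.Customer": set(),
    "dim.Product": set(),
    "fact.Sale": {"dim.Customer", "dim.Product"},
}

order = load_order(dependencies)
print(order)
```

The two dimension loads have no dependency on each other, so their relative order is not fixed; only the position of the fact load after both of them is guaranteed.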